How Deep is your… Neural Network? How deep should it be?

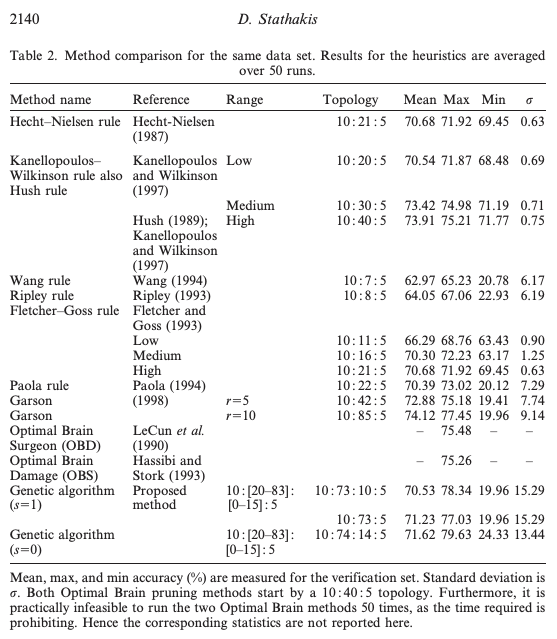

How many hidden layers should your neural network have? How large or deep can, or should, a fully-connected neural network be? All good questions, and here we explore some answers. This book chapter takes the cake on that last question: At present day, it looks …
